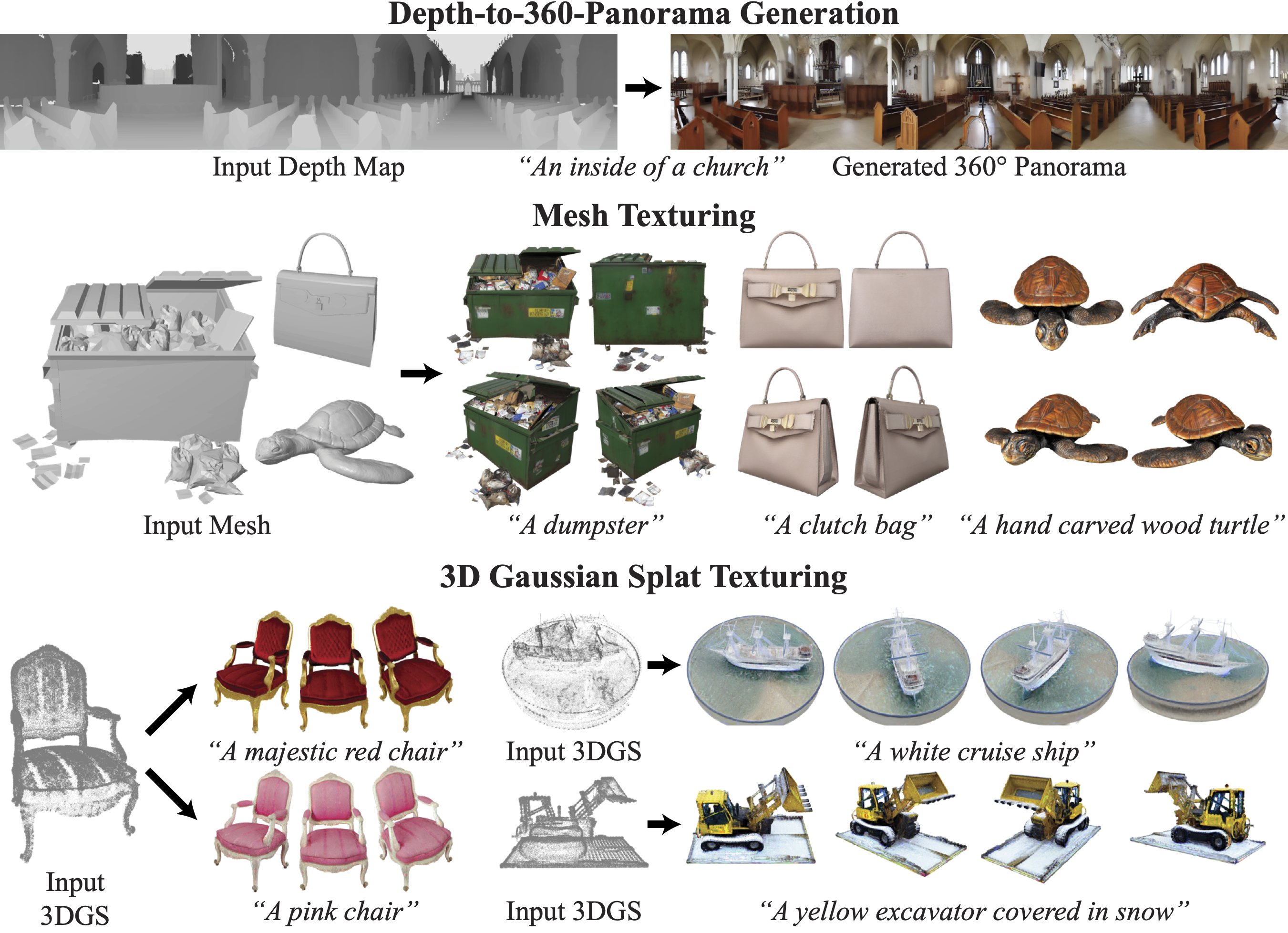

We introduce a general diffusion synchronization framework for generating diverse visual content, including ambiguous images, panorama images, 3D mesh textures, and 3D Gaussian splat textures, using a pretrained image diffusion model. We first present an analysis of various scenarios for synchronizing multiple diffusion processes through a canonical space. Based on this analysis, we introduce a novel synchronized diffusion method, SyncTweedies, which averages the outputs of Tweedie’s formula while conducting denoising in multiple instance spaces. Compared to previous work that achieves synchronization through finetuning, SyncTweedies is a zero-shot method that requires no finetuning, preserving the rich prior of diffusion models trained on Internet-scale image datasets without overfitting to specific domains. We verify that SyncTweedies offers the broadest applicability across diverse applications and outperforms the previous state of the art for each application.
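The idea of averaging Tweedie outputs while denoising in instance spaces can be illustrated with a toy sketch. Everything specific here is an assumption for illustration, not the authors' implementation: the canonical space is a 1D strip, each instance space is an overlapping window of it (projection is a crop, unprojection is a paste), the noise predictor `toy_predict_noise` is a hypothetical stand-in for a pretrained diffusion model, and the update is a deterministic DDIM-style step.

```python
import numpy as np

def tweedie_x0(x_t, eps, abar_t):
    """Tweedie's formula: estimate the clean sample from a noisy one."""
    return (x_t - np.sqrt(1.0 - abar_t) * eps) / np.sqrt(abar_t)

def ddim_step(x_t, x0, abar_t, abar_prev):
    """Deterministic DDIM-style update toward the given clean estimate x0."""
    direction = (x_t - np.sqrt(abar_t) * x0) / np.sqrt(1.0 - abar_t)
    return np.sqrt(abar_prev) * x0 + np.sqrt(1.0 - abar_prev) * direction

def average_in_canonical(x0_list, offsets, width):
    """Paste each window into the canonical strip and average the overlaps."""
    total = np.zeros(width)
    count = np.zeros(width)
    for x0, off in zip(x0_list, offsets):
        total[off:off + x0.shape[0]] += x0
        count[off:off + x0.shape[0]] += 1.0
    return total / np.maximum(count, 1.0)

def sync_step(xs, offsets, width, abar_t, abar_prev, predict_noise):
    """One synchronized step: average Tweedie outputs, then denoise per instance."""
    # 1. Per-instance clean estimates via Tweedie's formula.
    x0s = [tweedie_x0(x, predict_noise(x), abar_t) for x in xs]
    # 2. Synchronize the estimates by averaging them in the canonical space.
    canonical_x0 = average_in_canonical(x0s, offsets, width)
    # 3. Project the averaged estimate back into each instance space (crop).
    synced = [canonical_x0[o:o + x.shape[0]] for x, o in zip(xs, offsets)]
    # 4. Each instance keeps its own noisy variable and takes a denoising step.
    new_xs = [ddim_step(x, s, abar_t, abar_prev) for x, s in zip(xs, synced)]
    return new_xs, canonical_x0

def toy_predict_noise(x):
    # Hypothetical placeholder for a pretrained model's noise prediction.
    return 0.1 * x

rng = np.random.default_rng(0)
offsets = [0, 4]  # two length-8 windows overlapping on a strip of width 12
xs = [rng.standard_normal(8) for _ in offsets]
xs, canonical = sync_step(xs, offsets, 12, 0.5, 0.8, toy_predict_noise)
```

In this sketch only the clean estimates are shared across windows; the noisy variables stay separate per instance, which is the distinction the abstract draws between averaging Tweedie outputs and denoising in the instance spaces.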

"A dumpster"

"A clutch bag"

"A lemon"

"A hand carved wood turtle"

"A nascar"

"A hamburger"

"An hourglass"

"A jeep"

"A turtle"

➡

"A golden statue

of a turtle"

"A car"

➡

"A luxurious

red sports car"

"A lantern"

➡

"A chinese style lantern"

"A nascar"

➡

"A car with graffiti"

"An elephant"

➡

"An african elephant"

"An axe"

➡

"A wooden axe"

"A majestic red chair"

"A photo of cucumbers"

"A photo of a yellow excavator covered in snow"

"A photo of a white cruise ship at sea"

"A leather chair"

"A photo of corns"

"A white drum kit"

"A photo of a pirate ship at sea"

"A photo of a mountain range at twilight"

"A photo of a beautiful ocean with coral reef"

"A photo of a lake under the northern lights"

"A house at night"

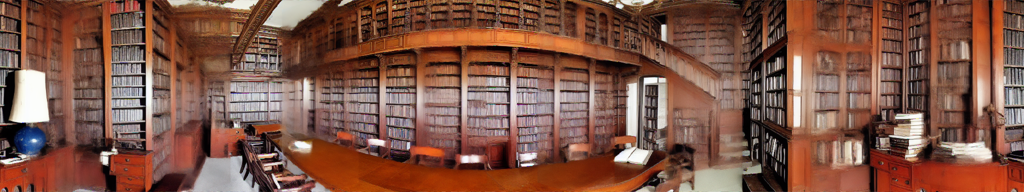

"An old looking library"

"A room that has been painted gold"

Methods compared: Case1, Case2 (SyncTweedies), Case3, Case4, Case5, Paint-it, TEXTure, Text2Tex

Prompts: "Baseball glove", "Minivan", "iPod", "Pigeon"

Methods compared: Case2 (SyncTweedies), Case5, SDS, IN2N

Prompts: "A photo of a tree with multicolored leaves", "A photo of a wooden carving of a microphone", "A photo of an intricate wooden carving of a ship", "A photo of a purple chair", "A photo of carrots", "A photo of a tree covered in snow"

@article{Kim2024SyncTweedies,
  title = {SyncTweedies: A General Generative Framework Based on Synchronized Diffusions},
  author = {Kim, Jaihoon and Koo, Juil and Yeo, Kyeongmin and Sung, Minhyuk},
  year = {2024},
  journal = {arXiv:2403.14370},
}